Every wave of innovation in artificial intelligence (AI) brings real technological progress, along with a dramatic rise in hype. With every breakthrough, new narratives emerge: AI is portrayed as “magical,” endowed with its own will, on the verge of becoming superhuman, or conversely as something completely uncontrollable by law.

As a result, this fog of myths makes AI opaque to the public, complicates decision-making for organizations, and distracts attention from the real technical and societal challenges.

In this article, we aim to clarify two key questions:

- What are the main myths currently surrounding AI?
- And what technical, physical, and social realities help dismantle them?

The Major Myths Shaping Our View of AI

Several myths structure today’s collective imagination about artificial intelligence.

“AI has agency.”

The idea that AI systems act on their own initiative, with intentions, goals, or desires.

“Superintelligence is imminent.”

The belief that we are only a few years, or even months, away from a general intelligence far surpassing human capabilities.

“AI can be objective or impartial.”

The assumption that algorithms are inherently neutral because they rely on computation.

“AI has a clear definition.”

As if AI referred to a single, clearly defined technology, when in reality no universal definition exists.

“Ethical guidelines are enough to protect us.”

The perception that voluntary ethical charters are sufficient safeguards against harmful AI uses.

“AI cannot be regulated.”

The claim that technological innovation moves too fast for legal systems to keep up.

“AI can solve any problem.”

The idea that AI is a universal solution applicable to any technical, economic, or social challenge.

In reality, these myths stem from a mixture of marketing, science fiction, and technical misunderstanding. To move beyond them, we need to return to what AI actually is today.

1. Agency and Consciousness: AI as a “Stochastic Parrot”

One of the most common misconceptions is attributing intention to AI. We often talk about what AI “wants,” “decides,” or “thinks.” Yet modern systems, especially large language models (LLMs), function much more simply.

Models That Predict, Not Understand

An LLM does not interpret your sentences in the human sense. Technically, it:

- receives a sequence of tokens (pieces of words) as input
- computes a probability distribution over the next token using a trained neural network
- selects or samples the next token according to this distribution
- repeats the process until a complete response is produced

This mechanism relies on massive statistical correlations learned during training. At no point does the system possess:

- semantic understanding of concepts
- an internal model of the world comparable to a human’s
- independent intentions or goals

In other words, an LLM is what researchers sometimes call a “stochastic parrot”: a machine that reproduces learned language structures in sophisticated probabilistic combinations.
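The four-step generation loop (tokens in, probability distribution, sampling, repeat) can be illustrated with a deliberately tiny sketch. It assumes whole words as tokens and replaces the trained neural network with a table of bigram counts (how often one word follows another), so it is far simpler than a real LLM; the function names and the toy corpus are hypothetical. What it shares with an LLM is the structure of the loop, with no representation of meaning anywhere.

```python
import random
from collections import Counter, defaultdict


def train_bigram(corpus: str) -> dict[str, Counter]:
    """Count how often each word follows each other word: the model's only 'knowledge'."""
    tokens = corpus.split()  # toy tokenizer: whole words instead of subword tokens
    counts: dict[str, Counter] = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts


def next_token_distribution(counts: dict[str, Counter], token: str) -> dict[str, float]:
    """Turn raw counts into a probability distribution over the next token."""
    followers = counts.get(token)
    if not followers:
        return {}
    total = sum(followers.values())
    return {tok: n / total for tok, n in followers.items()}


def generate(counts: dict[str, Counter], start: str, max_tokens: int = 10, seed: int = 0) -> str:
    """Repeatedly sample the next token until a length limit or a dead end is reached."""
    rng = random.Random(seed)
    output = [start]
    for _ in range(max_tokens):
        dist = next_token_distribution(counts, output[-1])
        if not dist:  # this word was never followed by anything in the corpus
            break
        candidates, probs = zip(*dist.items())
        output.append(rng.choices(candidates, weights=probs, k=1)[0])
    return " ".join(output)


corpus = "the cat sat on the mat the cat ate the fish"
counts = train_bigram(corpus)
print(next_token_distribution(counts, "the"))
print(generate(counts, "the", seed=1))
```

In the toy corpus, "the" is followed by "cat" twice and by "mat" and "fish" once each, so the distribution after "the" is 0.5, 0.25, and 0.25. The generated sentence changes with the seed, but it can only ever recombine word pairs that appeared in training.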

Anthropomorphism as a Persistent Bias

If these systems appear to “think,” it is largely because humans naturally anthropomorphize systems that display seemingly intelligent behavior. This cognitive bias is central to many misunderstandings about AI today.

2. Superintelligence and the Resource Wall

Another dominant narrative suggests that we are on the verge of general superintelligence, held back only by corporate caution. However, the actual infrastructure behind AI tells a different story.

The Data Wall: A Finite Resource

Today’s large models rely on enormous volumes of high-quality human-generated data: text, conversations, code, and multimedia content. But this resource is not infinite.

Estimates suggest that high-quality training data suitable for ever-larger models could be largely exhausted between 2026 and 2032. Beyond that point, developers would have to either:

- reuse existing datasets repeatedly, yielding limited improvements, or
- rely on synthetic data, introducing new risks and feedback loops.
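The feedback-loop risk of training on synthetic data can be illustrated with a toy version of what is often called "model collapse": each generation fits a normal distribution to a small sample drawn from the previous generation's fit, so every model learns only from its predecessor's output. The sample size, number of generations, and function name are hypothetical choices; this is a statistical caricature, not a simulation of LLM training.

```python
import random
import statistics


def recursive_fit(generations: int = 200, sample_size: int = 10, seed: int = 0) -> list[float]:
    """Fit a Gaussian, sample from the fit, refit on those samples, and repeat.

    Returns the fitted standard deviation after each generation.
    """
    rng = random.Random(seed)
    mean, std = 0.0, 1.0  # generation 0: the "real", human-generated distribution
    history = [std]
    for _ in range(generations):
        # The next model only ever sees synthetic samples from the current one.
        samples = [rng.gauss(mean, std) for _ in range(sample_size)]
        mean = statistics.fmean(samples)
        std = statistics.pstdev(samples)  # maximum-likelihood estimate of the spread
        history.append(std)
    return history


stds = recursive_fit()
print(f"start: {stds[0]:.3f}, after 50: {stds[50]:.3g}, after 200: {stds[-1]:.3g}")
```

With only ten samples per generation, the fitted spread tends to drift toward zero: the maximum-likelihood variance estimate is biased low on small samples, and rare values in the tails are seldom drawn, so later generations lose the diversity of the original data. The exact numbers depend on the seed.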

Physical Constraints and Diminishing Returns

The idea of unlimited growth in model power faces several practical limits.

Energy and cooling constraints

The computing density required for training and deploying the largest models pushes data centers toward the limits of available electrical power and cooling capacity.

Hardware limits

GPUs and other accelerators are approaching physical limits in terms of performance per watt and cost efficiency.

Diminishing returns

Scaling models by increasing parameters, data, or compute still improves performance, but each additional gain becomes smaller relative to the resources invested.
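Diminishing returns can be made concrete with the power-law form that empirical scaling studies commonly fit: loss falls as a power of training compute toward an irreducible floor, loss(C) = l_inf + a · C^(-alpha). The constants below (`l_inf`, `a`, `alpha`) and the starting compute are made-up illustrative values, not measurements from any real model.

```python
def loss(compute: float, l_inf: float = 1.7, a: float = 10.0, alpha: float = 0.1) -> float:
    """Hypothetical scaling curve: irreducible loss plus a term that shrinks with compute."""
    return l_inf + a * compute ** (-alpha)


def gains_per_decade(start: float = 1e18, decades: int = 6) -> list[float]:
    """Loss reduction bought by each successive 10x increase in compute."""
    gains = []
    compute = start
    for _ in range(decades):
        gains.append(loss(compute) - loss(compute * 10))
        compute *= 10
    return gains


for i, gain in enumerate(gains_per_decade()):
    print(f"10x step {i + 1}: loss improves by {gain:.4f}")
```

Under this form, every tenfold increase in compute multiplies the reducible part of the loss by 10^(-alpha), so each new decade of compute, which costs ten times more than the last, buys a smaller absolute improvement, and the loss never drops below `l_inf`.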

These “resource walls” do not prevent progress, but they challenge the idea of a straightforward path toward limitless superintelligence.

3. Objectivity and Impartiality: AI as a Mirror of Human Bias

AI is often presented as a way to eliminate human bias. In reality, AI systems frequently inherit and sometimes amplify existing inequalities.

Data Bias: Who Is Represented?

Models can only generalize effectively if training data represent a sufficiently diverse set of situations and populations.

When datasets are imbalanced, performance degrades unevenly. Studies have shown, for instance, that some commercial facial analysis systems misclassified the gender of darker-skinned women in up to about 35% of cases, compared with under 1% for lighter-skinned men.

This is not an isolated bug. It reflects underlying representation biases in the data.
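A practical consequence is that a single aggregate accuracy figure can hide exactly this kind of disparity. A minimal sketch of disaggregated evaluation, using made-up records in which one group is heavily under-represented, shows a 5% overall error rate coexisting with a 30% error rate for the smaller group. The records, group labels, and function name are hypothetical.

```python
from collections import defaultdict


def error_rates_by_group(records):
    """records: iterable of (group, true_label, predicted_label) tuples.

    Returns the overall error rate and a dict of per-group error rates.
    """
    errors: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for group, truth, predicted in records:
        totals[group] += 1
        errors[group] += int(truth != predicted)
    overall = sum(errors.values()) / sum(totals.values())
    per_group = {group: errors[group] / totals[group] for group in totals}
    return overall, per_group


# Hypothetical evaluation set: group "A" has 90 examples, group "B" only 10.
records = (
    [("A", 1, 1)] * 88 + [("A", 1, 0)] * 2
    + [("B", 1, 1)] * 7 + [("B", 1, 0)] * 3
)
overall, per_group = error_rates_by_group(records)
print(f"overall error: {overall:.1%}")
for group, rate in per_group.items():
    print(f"group {group}: {rate:.1%}")
```

Reporting `per_group` alongside `overall` is what makes the gap visible; the aggregate alone would suggest the system works well for everyone.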

Design Bias: Optimization Choices Matter

Even with balanced datasets, models reflect the priorities of their designers:

- How is overall accuracy balanced against fairness between groups?
- Which metrics are optimized during training and deployment?
- What trade-offs are accepted between false positives and false negatives?

These decisions directly shape who benefits from an AI system and who may be harmed. Claims of algorithmic objectivity often overlook these design choices.
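The trade-off between false positives and false negatives is often settled by a single number: the score threshold above which a case is flagged. In this sketch, with hypothetical scores and labels, moving the threshold shifts errors from one kind to the other; in this data no threshold removes both, so someone has to decide which mistakes matter more. The function name is hypothetical.

```python
def error_rates(scores: list[float], labels: list[int], threshold: float) -> tuple[float, float]:
    """Flag every case with score >= threshold; return (false positive rate, false negative rate)."""
    false_pos = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    false_neg = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    return false_pos / labels.count(0), false_neg / labels.count(1)


# Hypothetical model scores; label 1 means the case really should be flagged.
scores = [0.10, 0.30, 0.35, 0.60, 0.40, 0.55, 0.70, 0.90]
labels = [0, 0, 0, 0, 1, 1, 1, 1]

for threshold in (0.3, 0.5, 0.65):
    fpr, fnr = error_rates(scores, labels, threshold)
    print(f"threshold {threshold}: false positive rate {fpr:.0%}, false negative rate {fnr:.0%}")
```

In a fraud-detection setting, for example, a lower threshold blocks more legitimate customers (false positives), while a higher one lets more fraud through (false negatives).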

4. The Plural Architecture of AI

Contrary to popular belief, “artificial intelligence” does not describe a single unified technology. Instead, it is an umbrella term covering a broad and heterogeneous set of methods, theories, and applications.

A Hierarchy of Often-Confused Concepts

Many people use AI, Machine Learning, and Deep Learning interchangeably, although they represent different levels of abstraction.

Artificial Intelligence (AI)

The broader field of computer science focused on creating systems capable of performing tasks that require human-like cognitive abilities.

Machine Learning (ML)

A subset of AI in which systems learn patterns from data rather than relying solely on explicit programming.

Deep Learning (DL)

A specialized ML approach using multi-layer neural networks to process complex data such as images, speech, or language.
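The difference between explicit programming and machine learning can be shown on a single decision. In the sketch below, the first function encodes a threshold chosen by a developer; the second learns its threshold from labeled examples by picking the value with the fewest mistakes (a one-feature "decision stump", about the simplest possible learner). The transaction amounts, labels, and function names are hypothetical. Deep learning applies the same idea of fitting parameters to data, but with millions or billions of parameters arranged in layered neural networks.

```python
def hand_written_rule(amount: float) -> bool:
    """Explicit programming: a developer decided that amounts above 1000 are suspicious."""
    return amount > 1000.0


def learn_threshold(examples: list[tuple[float, bool]]) -> float:
    """Machine learning in miniature: choose the threshold that makes the fewest
    mistakes on labeled (amount, is_fraud) examples."""
    def mistakes(threshold: float) -> int:
        return sum((amount > threshold) != is_fraud for amount, is_fraud in examples)

    candidates = sorted({amount for amount, _ in examples})
    return min(candidates, key=mistakes)


# Hypothetical labeled history of transactions.
history = [(20, False), (150, False), (300, False), (480, True), (700, True), (2500, True)]
learned = learn_threshold(history)
print("learned threshold:", learned)
print("700 flagged by hand-written rule?", hand_written_rule(700))
print("700 flagged by learned rule?", 700 > learned)
```

The learned rule adapts to whatever the labeled data contain, which is also why it inherits any gaps or biases in that data.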

Divergent Definitions

The meaning of AI changes depending on perspective.

- Scientific definition: a research discipline exploring computational models of cognition.
- Technological definition: systems capable of perceiving their environment and taking actions accordingly.
- Popular definition: a largely anthropomorphic vision attributing awareness or autonomy to machines.

A Fragmented Ecosystem

AI is not monolithic. It includes multiple research traditions and technical approaches.

Two historical families illustrate this diversity:

Symbolic AI

Systems based on logical rules and expert knowledge.

Connectionist AI

Statistical approaches based on large datasets and neural networks, including modern language models.
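Symbolic systems work very differently from statistical ones: knowledge is written down as explicit if-then rules, and an inference engine derives new facts from them. A minimal forward-chaining sketch, with a hypothetical toy rule base and function name, shows the idea; every conclusion can be traced back to a rule a human wrote.

```python
def forward_chain(facts: set[str], rules: list[tuple[set[str], str]]) -> set[str]:
    """Apply if-then rules until no new fact can be derived.

    Each rule is (premises, conclusion): if all premises are known, the conclusion is added.
    """
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if conclusion not in known and premises <= known:
                known.add(conclusion)
                changed = True
    return known


# Hypothetical expert-system rules written by hand.
rules = [
    ({"has_feathers"}, "is_bird"),
    ({"is_bird", "cannot_fly", "swims"}, "is_penguin"),
]
print(sorted(forward_chain({"has_feathers", "cannot_fly", "swims"}, rules)))
```

Nothing here is learned from data: if a situation is not covered by a rule, the system simply derives nothing about it.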

Narrow AI vs General AI

Today’s systems belong entirely to narrow AI: they are designed to perform specific, well-defined tasks.

Artificial General Intelligence (AGI), capable of learning any intellectual task a human can perform, remains a speculative concept.

5. Ethics, Marketing, and the Need for Regulation

In response to AI risks, many organizations have adopted ethical charters and voluntary guidelines. While useful, these tools have clear limitations.

Ethical Marketing

Without enforcement mechanisms, many ethical charters function more as reputation tools:

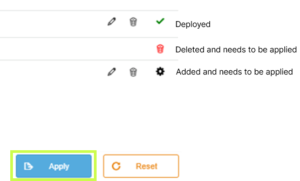

Toward Enforceable Regulation: The EU AI Act

Contrary to the myth that AI cannot be governed, regulatory frameworks are emerging.

The European Union’s AI Act, which entered into force in August 2024, takes a risk-based approach:

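- Unacceptable-risk systems are banned
- High-risk systems must comply with strict requirements including transparency, traceability, documentation, conformity assessments, and human oversight
- Limited-risk systems, such as chatbots, are subject to transparency obligations, for example informing users that they are interacting with an AI system
- Minimal-risk systems face little or no additional regulation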

The goal is not to slow innovation, but to ensure that AI systems remain accountable within existing legal frameworks.

6. AI Is Not a Magic Wand

Perhaps the most persistent myth is that AI can solve any problem.

In reality, successful AI systems are:

- specialized, designed for specific tasks such as image recognition, text summarization, fraud detection, or code generation
- limited in common sense, often failing when faced with situations outside their training distribution
- highly context-dependent, relying on data quality, system integration, and human oversight

The same model may perform extremely well in a well-defined environment yet fail dramatically when conditions change or when real-world usage diverges from intended scenarios.
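Failure outside the training distribution can be reproduced with almost any model. In the hypothetical setup below, the true relationship is quadratic (y = x²), but the model, a straight line fitted by least squares, only ever sees inputs between 1 and 2. Inside that range it looks excellent; far outside it, its predictions are badly wrong, even though nothing about the model has changed. All names and data are illustrative assumptions.

```python
def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    return slope, mean_y - slope * mean_x


def true_relation(x: float) -> float:
    """Hypothetical real-world relationship the model is trying to learn."""
    return x ** 2


# Training data only covers x in [1, 2].
train_x = [1 + i / 10 for i in range(11)]
slope, intercept = fit_line(train_x, [true_relation(x) for x in train_x])


def predict(x: float) -> float:
    return slope * x + intercept


in_range_error = max(abs(predict(x) - true_relation(x)) for x in train_x)
shifted_error = abs(predict(10.0) - true_relation(10.0))
print(f"worst error on training range: {in_range_error:.2f}")
print(f"error at x = 10: {shifted_error:.2f}")
```

Evaluating only on data that resemble the training set would rate this model as nearly perfect, which is why testing under realistic, shifted conditions matters before deployment.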

AI as a Component, Not a Strategy

For organizations, AI should be viewed as:

- a technical component within a larger system architecture
- integrated into a broader strategy involving governance, metrics, risk management, and human supervision

The wrong question is:

“How can we add AI everywhere?”

The better question is:

“On which well-defined problems does AI provide a real advantage compared to existing solutions?”

Moving Beyond the Myths

Today’s AI is neither a conscious entity, nor an imminent superintelligence, nor a universal solution.

It is a set of powerful techniques deeply grounded in real-world constraints. These systems are limited by physical infrastructure such as energy, cooling, and hardware, as well as by the availability of data and computational resources. They are also shaped by the social structures and human biases embedded in the data and objectives guiding their development.

By dismantling the myths of autonomous agency, imminent superintelligence, perfect objectivity, legal ungovernability, and universal applicability, we can ask better technical questions, design safer systems, and build more effective regulatory frameworks.

Ultimately, understanding these realities allows us to treat AI for what it truly is: a powerful but specialized tool that must be used with rigor, transparency, and human oversight.
